It’s a Long Season

How my grandfather taught me to think like a statistician

Dr. Bobby Scott’s Statcast & Stethoscopes newsletter connects baseball and medicine better than anyone. If you want regular, clear, baseball-inspired insights to sharpen how you think, read him.

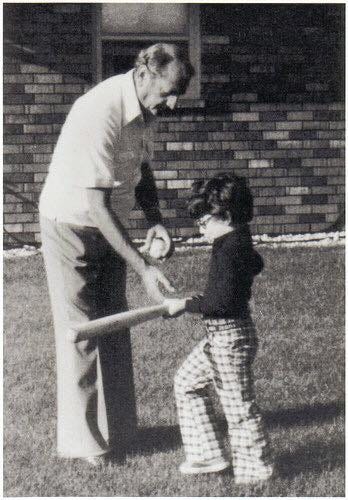

But I have a baseball story too. It starts with my grandfather Leo, who came to this country from a shtetl in Russia at age nine. He had little formal education, worked as a door-to-door salesman, read the newspaper every day, and loved baseball. He also taught me more statistics than I ever learned in a classroom.

In April 1987, the Milwaukee Brewers started their season by winning their first 13 games. The city was electric, and I did some math: If they played even .550 baseball the rest of the way, they would cruise to a divisional championship. I told my grandfather, “With thirteen wins in the bank, they just have to avoid a collapse and they’ll be in the AL championship!” My grandfather listened and said, “Jim-eleh, it’s a long season. The cream always rises to the top.”(1)

That sentence was Bayesian reasoning, even if neither of us knew the term at the time. My grandfather’s prior probability, the “base rate,” came from a lifetime of listening to, watching, and reading about baseball. The Brewers had also been projected to finish in the middle of the division: a solid team, not terrible, but certainly not dominant. Thirteen games did not erase that prior expectation. My grandfather also understood regression to the mean: extreme performance over a short stretch tends to drift back toward underlying ability over a longer horizon. A 162-game season dilutes random variation; a two-week stretch amplifies it.
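Here is a minimal Python sketch of the two ideas in that paragraph. It rests on stated assumptions. It models the Brewers’ underlying winning percentage with a Beta prior worth a hypothetical 100 games of .500 baseball. That prior is my stand-in for a middle-of-the-division projection, not a real preseason forecast. The sketch updates the prior with the 13-0 start, then compares the binomial noise in a winning percentage over 13 games versus 162. The function names are illustrative.

```python
import math

# Hypothetical prior: a roughly .500 team, weighted as if we had already
# watched 100 games (50 wins, 50 losses). Both numbers are assumptions.
PRIOR_WINS = 50.0
PRIOR_LOSSES = 50.0


def posterior_win_pct(wins: int, losses: int,
                      prior_wins: float = PRIOR_WINS,
                      prior_losses: float = PRIOR_LOSSES) -> float:
    """Posterior mean of a Beta-Binomial model: prior pseudo-counts plus observed games."""
    return (prior_wins + wins) / (prior_wins + prior_losses + wins + losses)


def sd_win_pct(true_pct: float, games: int) -> float:
    """Binomial standard deviation of an observed winning percentage over `games` games."""
    return math.sqrt(true_pct * (1 - true_pct) / games)


if __name__ == "__main__":
    print(f"Raw win pct after 13-0:    {13 / 13:.3f}")
    print(f"Posterior mean after 13-0: {posterior_win_pct(13, 0):.3f}")
    # Noise in the observed winning percentage of a true .500 team
    print(f"SD over 13 games:  {sd_win_pct(0.5, 13):.3f}")
    print(f"SD over 162 games: {sd_win_pct(0.5, 162):.3f}")
```

With these assumptions, the 13-0 start moves the estimate from .500 to about .558, not to 1.000. The standard deviation of a true .500 team’s winning percentage is roughly .139 over 13 games, but only about .039 over 162. A weaker prior would move further. Either way, thirteen games are an update, not a verdict.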

That year, the Brewers finished 91-71, third in the AL East. After the 13-0 start, they went 78-71, a .523 pace, well short of the .550 I had counted on. Not champions, but not a collapse either: they finished roughly where the preseason projections suggested.

My grandfather did not use statistical language, but he certainly thought that way. He taught me that new data do not replace prior knowledge; they modify it, and the weight you give them depends on how much data you truly have. He was guarding against over-updating on short streaks.

I think about this when I read medical studies or review research data. A drug trial reports a dramatic relative risk reduction, early separation of the event curves, and an impressive hazard ratio. Everyone is excited, social media is buzzing, and clinicians rush to prescribe the new medicine. The temptation is to treat the result as a new truth. But Bayesian thinking asks different questions. What were my priors before I read or heard about this study? How biologically plausible is the mechanism? How often do small studies, or studies stopped early, overestimate effect size? How long is the follow-up? How stable are the estimates? What is the pretest probability that the observed effect is real and durable?
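A short simulation can explore one of those questions: how often small studies overestimate effect size. This is a sketch of the so-called significance filter, sometimes called the winner’s curse. It does not model any particular trial. It assumes a hypothetical trial with a true benefit of 0.2 standard deviations and a standard error of 0.2. The simulation runs that trial 20,000 times and keeps only the runs that reach p < 0.05. The names and numbers are illustrative assumptions.

```python
import random
import statistics

# Hypothetical trial design (illustrative assumptions, not from any real study)
TRUE_EFFECT = 0.2   # true benefit, in standard-deviation units
STD_ERROR = 0.2     # SE of the estimate: outcome SD of 1, about 50 patients per arm
Z_CRIT = 1.96       # threshold for two-sided p < 0.05
N_TRIALS = 20_000


def significant_estimates(n_trials: int = N_TRIALS, seed: int = 1) -> list[float]:
    """Run the same small trial many times; keep estimates that reach significance."""
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    kept = []
    for _ in range(n_trials):
        # Each trial's estimate is the true effect plus normally distributed sampling error
        estimate = rng.gauss(TRUE_EFFECT, STD_ERROR)
        if estimate / STD_ERROR > Z_CRIT:  # a "positive" trial favoring treatment
            kept.append(estimate)
    return kept


if __name__ == "__main__":
    winners = significant_estimates()
    print(f"Share of trials reaching significance: {len(winners) / N_TRIALS:.2f}")
    print(f"True effect: {TRUE_EFFECT:.2f}")
    print(f"Mean effect among significant trials: {statistics.mean(winners):.2f}")
```

In expectation, only about one run in six reaches significance. Those runs report an average effect near 0.5, roughly two and a half times the true value. Stopping a trial early for benefit applies a similar selection step. That is one reason truncated trials can overstate the effect.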

The key point is that a study result or a clinical observation is not a heavenly revelation. It is an update. Small samples and unexpected findings should modify but not overwhelm strong priors.
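To make “modify but not overwhelm” concrete, here is a minimal sketch of a normal-normal Bayesian update on the log hazard ratio scale. In this update, the posterior is a precision-weighted average of the prior and the trial estimate. Every number is hypothetical. The prior is skeptical: centered on no effect (HR 1.0), with a standard deviation of 0.2 on the log scale. Two made-up trials both report HR 0.60. One is small (95% CI 0.40 to 0.90) and one is large (95% CI 0.52 to 0.69). The code backs the standard error out of each confidence interval with the usual normal approximation.

```python
import math


def normal_update(prior_mean: float, prior_sd: float,
                  data_mean: float, data_se: float) -> tuple[float, float]:
    """Combine a normal prior with a normal likelihood; return posterior mean and SD."""
    prior_precision = 1.0 / prior_sd ** 2
    data_precision = 1.0 / data_se ** 2
    post_precision = prior_precision + data_precision
    # Posterior mean: each source weighted by its precision (1 / variance)
    post_mean = (prior_precision * prior_mean + data_precision * data_mean) / post_precision
    return post_mean, math.sqrt(1.0 / post_precision)


def se_from_ci(lower: float, upper: float, z: float = 1.96) -> float:
    """Approximate SE of log(HR) from a reported 95% confidence interval."""
    return (math.log(upper) - math.log(lower)) / (2 * z)


if __name__ == "__main__":
    # Hypothetical skeptical prior on log(HR): centered at 0 (HR = 1.0), SD 0.2,
    # which puts most prior weight on hazard ratios between about 0.68 and 1.48.
    PRIOR_MEAN, PRIOR_SD = 0.0, 0.2

    # Made-up trials: same point estimate, different precision (HR, lower, upper)
    trials = {"small trial": (0.60, 0.40, 0.90), "large trial": (0.60, 0.52, 0.69)}

    for name, (hr, lo, hi) in trials.items():
        mean, sd = normal_update(PRIOR_MEAN, PRIOR_SD, math.log(hr), se_from_ci(lo, hi))
        ci = (math.exp(mean - 1.96 * sd), math.exp(mean + 1.96 * sd))
        print(f"{name}: reported HR {hr:.2f} -> posterior HR {math.exp(mean):.2f} "
              f"(95% interval {ci[0]:.2f} to {ci[1]:.2f})")
```

Under these assumptions, the small trial’s HR of 0.60 is pulled back to roughly 0.78. The large trial’s result barely moves, to about 0.64. Both trials report the same point estimate but earn very different updates, because the weight a result earns depends on how much information it carries.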

I think about it in clinic too. A patient loses thirty pounds after a heart attack. His LDL cholesterol and triglycerides plummet. All of his numbers look pristine, and he feels great. He asks whether he can stop his cholesterol medicines. After four months of discipline, the evidence feels decisive. But his baseline risk has not disappeared. He has established coronary artery disease. A short run of beautiful numbers does not rewrite his underlying biology. Four months is not four years. Furthermore, active weight loss can push lipids lower than where they will settle once his weight stabilizes. Bayesian reasoning requires context and time. We update our estimates gradually and respect our priors. We play the long game.

There is another part of this lesson. It goes back to something I wrote in my very first Substack essay about predicting the future: we can’t do it. We can’t know whether a hot start is destiny or variance. We can’t know which promising therapy will hold up over a decade. We can’t know which plaque will rupture, or whether a patient with advanced heart disease will get cancer or die in an accident.

Medicine and medical research are exercises in humility in the face of profound uncertainty. We use statistical inference to help guide us. I am pretty certain that my grandfather never used the terms “inference” or “priors,” but he understood that thirteen games are a small, noisy sample within a 162-game season.

“It’s a long season.”

PS. I will come back to my grandfather in a later post. His lessons did not end with baseball.

Footnote:

(1) The “-eleh” suffix is a Yiddish diminutive that indicates affection, warmth, and belonging, like “My Jimmy.”
